Ask ChatGPT, Gemini, Claude, or Llama about immigration, climate policy, welfare, gender ideology, or censorship, and the answers may differ in tone, but the underlying ideological tilt is strikingly similar. Multiple studies now find that leading language models lean left on contested political questions, often favouring progressive social assumptions and more interventionist economic positions. Researchers in Germany found strong alignment with left-wing parties across major models. Another study found instruction-tuned models were generally more left-leaning. A third concluded that larger models often become more politically skewed, not less. That is a serious problem for a technology sold as an impartial guide to information. If the tools increasingly used to explain the world already tilt in one direction, the question is no longer whether bias exists, but how far it shapes what millions of users come to regard as neutral truth.

It’s Not Just a Theory Anymore

For years, concerns about political bias in AI were brushed aside as anecdotal. That argument has weakened sharply. A 2025 study examining AI-based voting advice tools and large language models ahead of Germany’s federal election found that the models showed strong alignment, averaging more than 75 per cent, with left-wing parties, while their alignment with centre-right parties was below 50 per cent and with right-wing parties around 30 per cent. The authors warned that systems presented as neutral informational tools were in fact producing substantially biased outputs.

Another 2025 paper testing popular models against Germany’s Wahl-O-Mat framework reached a similar conclusion. It found a bias towards left-leaning parties and reported that this tendency was most pronounced in larger models. The study’s title was blunt enough on its own: Large Means Left.

A separate theory-grounded analysis based on 88,110 responses across 11 commercial and open models found that political bias measures can vary by prompt, but that instruction-tuned systems were generally more left-leaning. The important point is not that every model behaves identically. It is that the overall pattern keeps recurring across methods, datasets, and research teams.

A Disturbing Pattern

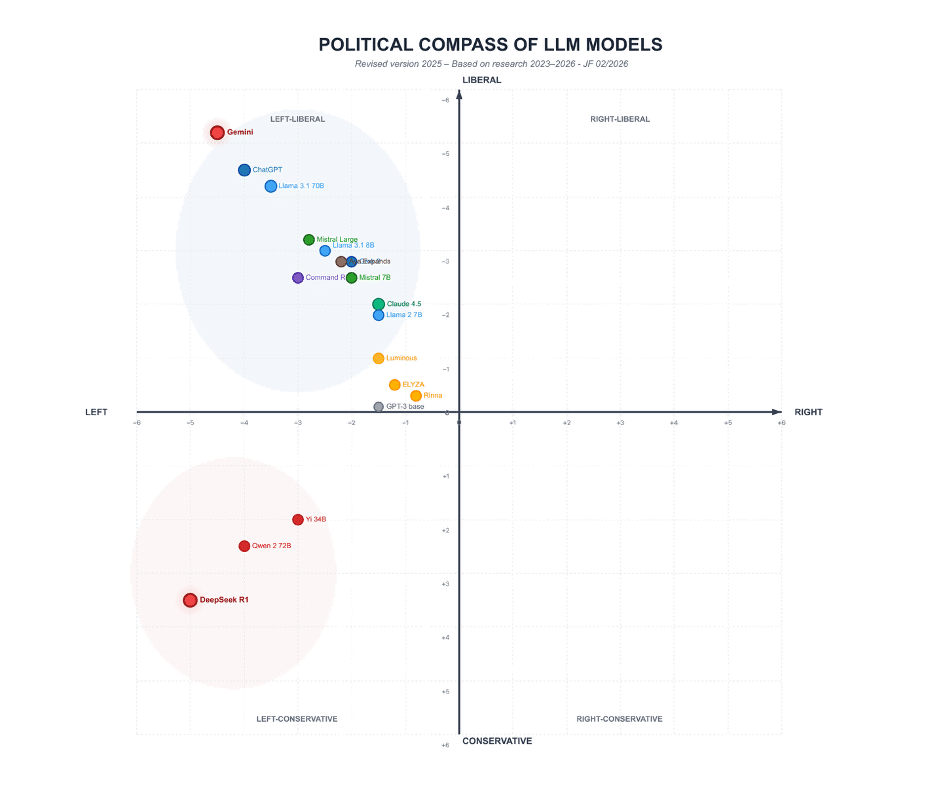

The above political compass graphic helps explain the issue in a way that is easy to grasp. The horizontal axis measures economic orientation from Left to Right. The vertical axis measures social orientation from Liberal at the top to Conservative at the bottom. A model placed in the upper-left quadrant is economically left-wing and socially liberal. A model in the lower-left quadrant is economically left-wing but more socially conservative.

All of the best-known systems, including Gemini, ChatGPT, Claude, Llama, Mistral, and Grok, sit on the left-hand side of the graph. Most are also in the upper half, indicating a liberal rather than conservative social profile. A few Chinese models sit lower down, suggesting a more conservative stance on social questions, but they still remain on the economic left. The striking feature is what is missing. There is no comparable cluster of major right-of-centre models.

That does not mean every answer from every model is uniformly partisan. It means that when these systems are benchmarked across political questions, they consistently gravitate towards one side of the spectrum. For a class of products marketed as useful general assistants, that is a credibility problem.

Why Do They All Lean the Same Way?

The first reason is the training material. Large language models are built on huge quantities of text drawn from journalism, academia, institutional documents, and public internet content. Those sources are not ideologically neutral. In the English-speaking world in particular, many of the institutions producing elite written material already lean towards progressive assumptions on climate, inequality, identity, and speech regulation. Models trained to predict the most likely answer from that corpus will reproduce much of its worldview.

The second reason is alignment. Models are not simply trained on raw text and released into the wild. They are fine-tuned through safety rules and human feedback. OpenAI itself says political bias can appear not only in explicit policy discussions but also in “subtle bias in framing or emphasis” during ordinary conversations. That admission matters. The slant is not always obvious. It often appears in which arguments are treated as mainstream, which concerns are foregrounded, and which objections are wrapped in caveats.

The third reason is that larger models do not appear to solve the problem. In several of the studies cited above, more capable systems were at least as politically skewed as smaller ones, and often more so. That cuts against the comforting idea that bias is merely a symptom of immaturity that will disappear as the technology improves.

How Can We Trust AI If It Can’t Remain Neutral?

Defenders of the industry often reply that language models do not “believe” anything. In a narrow technical sense that is true. They generate likely sequences of words. But users do not experience them as probability engines. They experience them as explanatory tools. If the explanation of a political issue repeatedly leans in one direction, the user is still being guided, whether or not the software has convictions of its own.

That is not just a theoretical concern. A Yale study published this month found that AI chatbots can influence users’ social and political opinions through latent bias, even when they are not explicitly trying to persuade. The researchers warned that people increasingly rely on chatbots for basic factual lookups, which means the framing of those answers matters. Bias does not need to arrive in the form of a slogan. It can arrive through emphasis, omission, and tone.

Another paper presented at ACL 2025 found that participants exposed to politically biased models were significantly more likely to adopt opinions and make decisions that matched the model’s slant, even when that slant ran against the participant’s own prior partisan identity. The study also found that many users failed to recognise the bias clearly. This is where the problem becomes more than academic. A system widely assumed to be objective can influence people precisely because it does not look like a propagandist.

AI Is Dangerously Effective

AI does not need to lecture users like a party activist to shape public opinion. It only needs to make one set of assumptions feel safer, more enlightened, or more fact-adjacent than the alternatives. That is especially important in education, journalism, search, and workplace software, where these tools increasingly act as intermediaries between people and information. Once the same ideological drift is embedded across multiple platforms, the bias becomes infrastructural.

This is what makes the current state of affairs so troubling. The political slant of a newspaper is visible. The slant of a chatbot is often disguised as balance. When several of the world’s most powerful AI products all lean in the same direction, public debate is no longer being filtered only by editors, broadcasters, and universities. It is also being filtered by machine systems built from the same institutional worldview.

Can AI Political Bias Be Fixed?

Research suggests the bias can be mitigated, at least to some degree. A Hoover Institution study on perceived slant found that neutrality instructions reduced users’ perception of ideological bias. OpenAI says it is trying to measure political bias more realistically in live conversational settings rather than relying on simplistic tests. Those are useful steps, but they also confirm the basic point. If firms are spending time measuring and reducing political slant, they know the slant is real.

The larger obstacle may be cultural rather than technical. An industry convinced that its own values are merely common sense is unlikely to notice how often those values are being smuggled into “helpful” answers. That is the cynical core of the issue. The leftward tilt of AI may not be the result of a grand conspiracy. It may be the more familiar problem of institutional self-belief, scaled up into software and then sold back to the public as intelligence.

Final Thought

The more evidence accumulates, the less plausible it becomes to dismiss concerns about AI’s ideological slant as a culture-war fantasy. Multiple studies, across different methods and countries, have found that leading models lean in a progressive direction, particularly on polarised issues. Researchers have also shown that these biases can influence users, often without being clearly recognised.

None of this necessarily means that every answer from every model is propagandistic. It does show, however, that the dominant AI systems of the age are not just hovering above politics in some antiseptic realm of pure reason. They are products of institutions, training sets, incentives, and alignment choices that repeatedly point in the same direction. If these tools are to become trusted civic instruments rather than ideological tutors in a polite machine voice, that reality will have to be confronted rather than denied.

