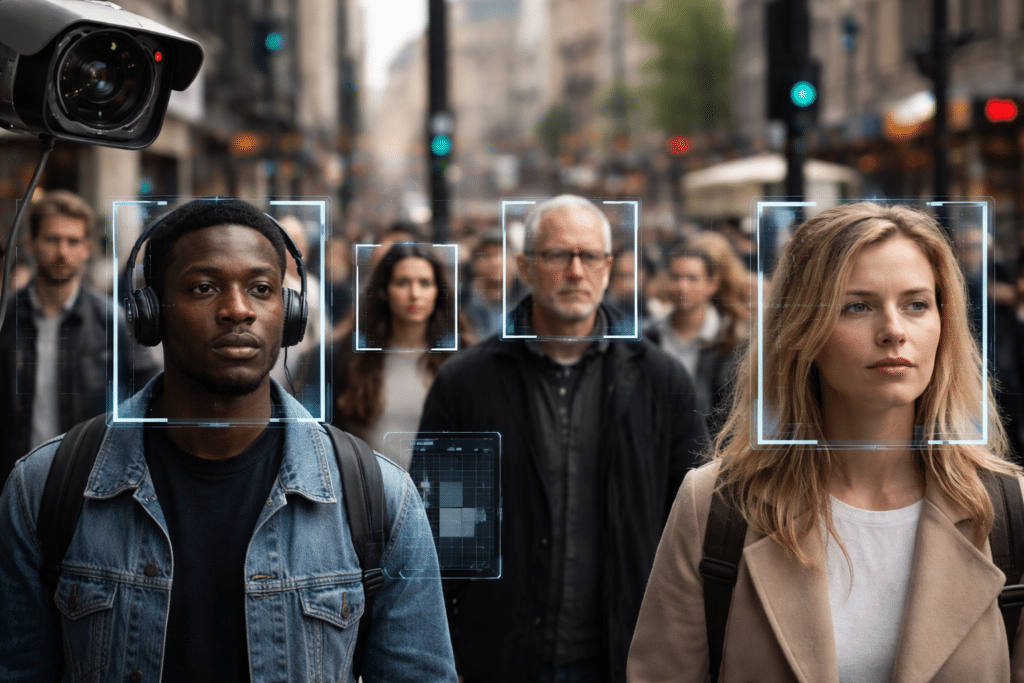

Essex Police has paused its use of live facial recognition after a Cambridge study found the system was statistically more likely to correctly identify black people than other ethnic groups, and more likely to identify men than women. The force also publicised figures that are worrying in their own right: around 1.3 million faces were scanned between August 2024 and February 2025, producing 48 arrests and “only one mistaken intervention.” That is being presented as reassurance. It should be read as evidence of how quickly mass biometric monitoring is being normalised in Britain. More than a million faces were scanned in public before the public had any clear answer to a basic question: what evidence was ever produced to justify deploying this in the first place?

Police’s Own Report Finds No Evidence Facial Recognition Reduces Crime

Essex’s own commissioned report also found “there was no statistically significant evidence that LFR (live facial recognition) deployments reduced crime” and that “crime levels were similar before, during and after deployments”. The report said the main impact appeared to be the identification of specific individuals, rather than any measurable deterrent effect on offending in the surrounding area.

That is awkward for a technology so often sold as a public-safety necessity. If the broader deterrent effect cannot be demonstrated, then the justification narrows considerably. The public is left with a system that scans enormous numbers of people, produces a modest number of interventions, and has not shown clear evidence of making the wider area safer. That is a much thinner case than ministers and police press releases usually imply.

It’s Not Just Essex: Facial Recognition Is Rolling Out Nationwide

By the end of 2025, thirteen police forces in England and Wales were using live facial recognition, according to Sky News. In January, the government said the number of facial-recognition vans would increase from 10 to 50. The Home Office has also cited more than 1,300 arrests in London between January 2024 and September 2025 linked to the technology. These figures are often presented as evidence of success, but they are also evidence of quiet expansion.

This is why the Essex pause should not be mistaken for restraint. National policy is moving in the opposite direction. The state is not stepping back from facial recognition; it is embedding it more deeply. Once that infrastructure becomes ordinary, opposition is pushed onto narrower ground: not whether the public should be scanned, but only whether the scanning is sufficiently balanced, proportionate, and accurate. That is a major shift in what citizens are expected to tolerate.

Accuracy Figures Are Troubling, Not Reassuring

For anyone already uneasy about facial recognition, the phrase “only one mistaken intervention” does not sound reassuring. It suggests a model of surveillance that is smooth enough to sustain itself politically. Essex’s own figures show around 1.3 million faces were scanned over 41 deployments, leading to 123 interventions and 48 arrests by police. That is one arrest for roughly every 27,000 faces scanned. The ratio tells its own story: a large public is subjected to biometric scrutiny so that a small number of targets can be located.
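The ratio is easy to check. The short sketch below reworks the arithmetic using only the figures quoted in this article; it treats “around 1.3 million” as exactly 1,300,000, so every result is approximate, and the variable names are purely illustrative.

```python
# Figures as quoted in the article for Essex Police's LFR deployments.
# Assumption: "around 1.3 million" is taken as exactly 1,300,000.
faces_scanned = 1_300_000
deployments = 41
interventions = 123
arrests = 48

# How many faces were scanned for each outcome.
scans_per_arrest = faces_scanned / arrests
scans_per_intervention = faces_scanned / interventions

# How the outcomes relate to each other and to each deployment.
arrests_per_deployment = arrests / deployments
arrest_share_of_interventions = arrests / interventions

print(f"Faces scanned per arrest:       {scans_per_arrest:,.0f}")
print(f"Faces scanned per intervention: {scans_per_intervention:,.0f}")
print(f"Arrests per deployment:         {arrests_per_deployment:.2f}")
print(f"Interventions ending in arrest: {arrest_share_of_interventions:.0%}")
```

On these inputs the first line comes out at roughly 27,000 faces per arrest, matching the figure above. The other lines suggest about 10,600 faces scanned per intervention, just over one arrest per deployment, and around four in ten interventions ending in an arrest.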

There is also a wider record here. The US National Institute of Standards and Technology (NIST) has previously found that facial-recognition algorithms can show substantial demographic differentials, with false-positive rates often varying by factors of 10 to more than 100 across groups in some contexts. It reported higher false-positive rates for women, African Americans, and particularly African American women in one-to-many search systems, precisely the sort of setting that raises the risk of false accusation or added surveillance. Essex’s findings do not emerge in a vacuum. They sit within a longer history of uneven performance and civil-liberties concern.

Final Thought

Essex Police has paused live facial recognition because the bias issue was exposed, not because the underlying power is too intrusive. Yet the same report that flagged fairness concerns also found no statistically significant short-term crime reduction. If facial recognition scans millions, delivers limited arrests, and cannot show that it actually reduces crime, what exactly are we being asked to accept it for?



Hi G Calder,

So when a system works they stop using it.

Sounds about right.

How about using the police to track down the benefit fraud the boat people are getting away with.

I read about £50 million with just one family.

How could this happen under our noses.

https://www.youtube.com/watch?v=D9uAkUdTwbA

How about the police look into grooming from 30 years ago.

I have noticed lots of neurological damage since the convid jabs rolled out. People have lost their minds and are irrational.. of course they don’t realise because they have lost their minds, but the insanity of the world is clear to the jab free….

🙏🙏


Hmm,

“Essex Police has paused its use of live facial recognition after a Cambridge study found the system was statistically more likely to correctly identify black people than other ethnic groups, and more likely to identify men than women.”

In the USA, FBI crime statistics show blacks commit over 50% of the violent crimes, at just 13% of the total population with approx. 6% being men. The same proportion likely exists in Britainistan.

If it works well on black men? Keep it!

https://stock.periscopefilm.com/gg44475-mission-mind-control-mkultra-program-cia-lsd-u-s-military-brain-washing-studies/

https://stock.periscopefilm.com/10494-athabasca-tar-sands-oil-sands-1967-bechtel-corp-promo-film-alberta-canada/ not once is it stated that the oil belongs to the people

https://stock.periscopefilm.com/xd11634-the-concept-1951-march-of-dimes-promo-film-polio-disease-vaccine-research/

Facial recognition scanning is a violation of right to privacy.

It makes me not want to go to public areas.

Lol so it worked really well just that it was the wrong colour…

A pity they can’t use it to find decent hard working police officers that tackle real crime..

Welcome to the image of the beast technology.